The Jury Is Asking About Punitive Damages. Read into that.

This week in the Dispatch: A damages question from the jury signals where deliberations could be heading; the AI preemption fight is back and uglier than ever; and Meta's big Illinois spending spree backfires.

Welcome back to The Dispatch from The Tech Oversight Project, your weekly updates on all things tech accountability. Follow us on Twitter at @Tech_Oversight and @techoversight.bsky.social on Bluesky.

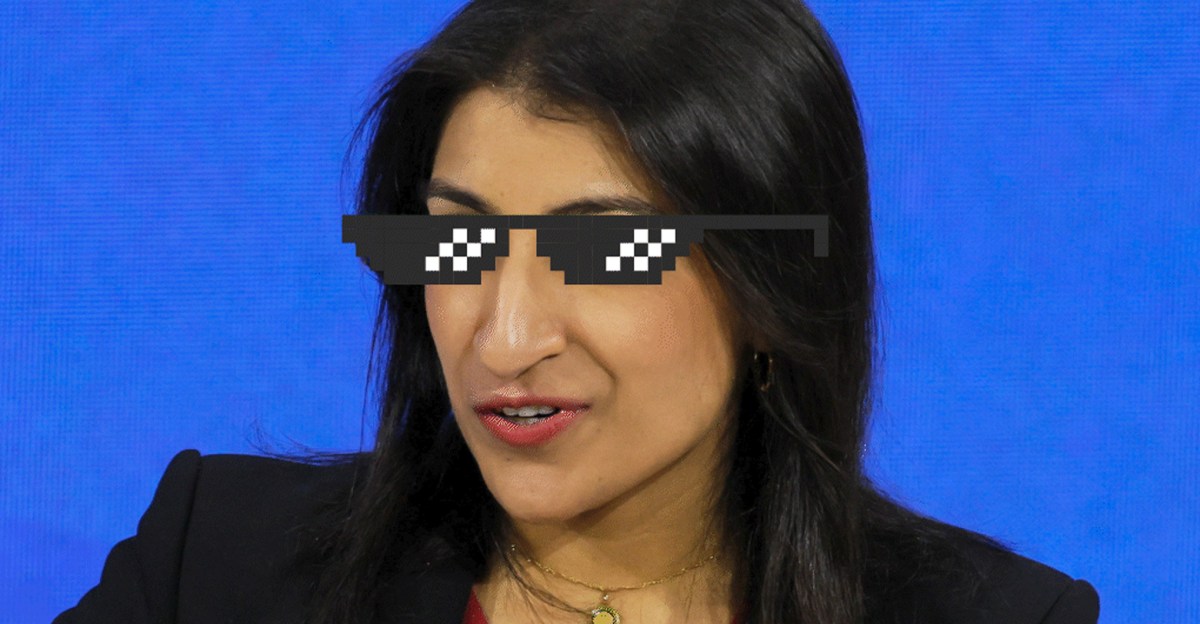

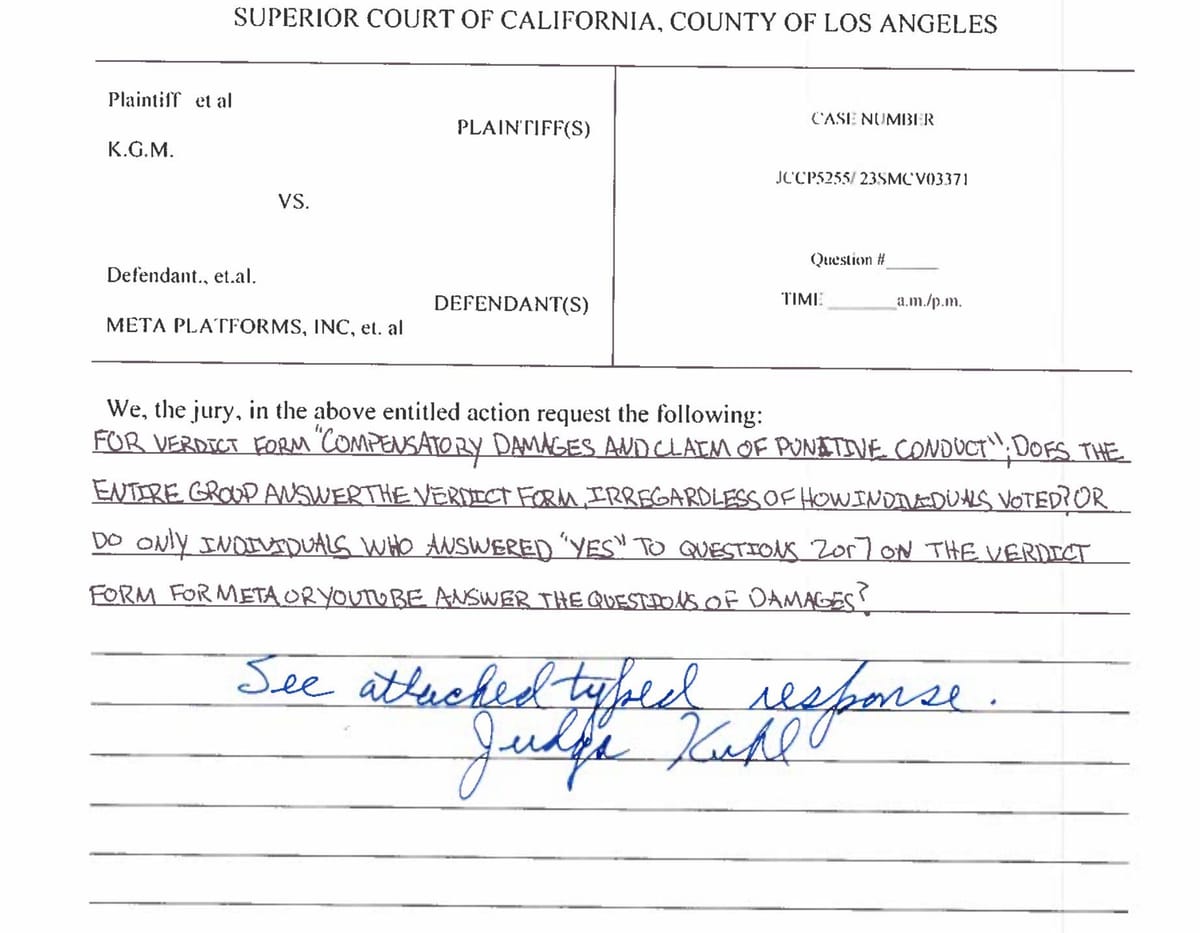

🍵 READING THE TEA LEAVES: Late Friday, the jury in the landmark social media addiction trial sent a note to Judge Kuhl asking for clarification on how to calculate compensatory and punitive damages – and if you know anything about how juries work, you know that’s probably a good sign.

As lead plaintiffs' attorney Mark Lanier put it on the courthouse steps: "You don't get there unless you've already decided that one or more defendants was negligent or failed to warn the way they should have warned – and that that was a substantial contributing factor to the damage done to Kaley."

In other words, the jury isn't debating liability. They're debating consequences.

This is the first time Big Tech has ever been forced to answer for these harms in a court of law. And the pressure from this trial is already forcing Meta and Google to react. As Lanier noted, both companies have visibly ramped up their so-called safety features since testimony began – a fact he addressed directly: "In our view, that's absolutely targeted to handle the PR mess. Remember, we've seen the internal documents; we've shown them to this jury; that showed, at various times, so many of their safety features are really just plugs for PR purposes more than they are realistic fixes."

We won the moment these executives were forced to answer under oath. Every day a whistleblower spoke the truth was a victory, and so was every internal document that entered the public record – no matter the final verdict.

🦠 AI PREEMPTION SEEKS HOST: The fight over AI regulation is back on in Washington, exposing a rift within Republican ranks. GOP leaders in the House and Senate, along with Donald Trump’s AI czar David Sacks, are once again exploring ways to preempt state AI laws entirely — potentially by attaching language to a broader reconciliation deal, or by packaging the provision with more popular internet legislation like kids online safety protections.

We stopped this nonsense in its tracks last July with a 99-1 vote in the Senate, because it would be an affront to people living in states with higher safety standards. Why should we accept a meaningless federal standard or trade progress for AI amnesty for Big Tech? States from California to Colorado to New York are far ahead of the federal government in establishing real safeguards to protect their citizens from the harms of unregulated and untested AI products. And in almost every state, new AI regulation bills have been introduced — more than 1,500 at last count.

Preempting state laws would tear down those safeguards and leave us exposed to an industry whose products have led to death and injury, lost jobs, legal and medical mishaps, and more. But the politics of AI are still unsettled, and Trump and his friends are splitting the GOP coalition. Republican governors and Republican AGs, an overwhelming share of Democrats, and 280 state legislators from both parties are on record as strenuously opposing federal preemption.

CONSERVATIVES FIGHT BIG AI: Today, nine conservative groups announced a new organization to fight dangerous AI products and Big Tech's AI amnesty – a long-running fight backed by a broad, bipartisan coalition. The Alliance for a Better Future, supported by Heritage, American Compass, Institute for Family Studies, and others, launched “to prioritize the interests of children, workers, and creators” on Capitol Hill and in state capitals. Watch this space, and learn more here.

BIG TECH GETS ILL: Tuesday's Illinois primary was supposed to showcase what Big Tech money can do in a down-ballot election. Super PAC spending flooded races up and down the ballot, with Meta leading the charge in backing candidates aligned with its interests.

The results? A near-total rebuke. Voters rejected most candidates backed by AI- and crypto-aligned spending. Meta's PAC backed four statehouse candidates, and only one won – a “dismal showing.” Candidates running on stronger oversight and accountability held their ground. As the spending totals became clear, the story wasn't Big Tech's political muscle. It was the lack thereof.

TOP’s Sacha Haworth: “As voters across the country reject Big Tech, its big-money PACs’ support is proving to be a stain rather than a boost to candidates. State governors, lawmakers and AGs are holding the industry’s feet to the fire on youth safety failures, and repercussions are showing up at the ballot box. 2026 candidates on both sides of the aisle would be wise to heed these warnings and support strong tech oversight, accountability, and reform.”

🦵 R.I.P. LEGS: Meta spent billions building the “future,” and now it’s getting unplugged – or so the company said, in an announcement last Tuesday that its VR “metaverse” product would be shut down.

The announcement was met with widespread mockery, to the point that by Thursday, the company backtracked, promising that the metaverse would remain online for now to serve its dozens, or possibly hundreds, of users worldwide.

Jokes aside: although the metaverse failed to take off, it was characterized by the same profits-over-safety design decisions that plague all of Meta’s products. It was the subject of whistleblower testimony to a Senate committee last year by ex-Meta researchers who said the company was aware that children were being harmed in VR, but did nothing. One added: “I wish I could tell you the percentage of children in VR experiencing these harms, but Meta would not allow me to conduct that research.”